Energy harvesting

You have probably heard this several times already – “We have just one earth; we had better take good care of her!” This slogan is growing louder as the use of information technology and its paraphernalia of gadgets and machines increases. Of the many kinds of environmental damage that our tech toys cause, the power consumed by these devices is a constant worry for the environmentalist and the common man alike. While the former worries about the resources being depleted to produce this power, the carbon footprint of the devices, the heat released by them, etc, the latter worries about sky-rocketing energy bills. Adoption of alternative power sources, such as solar power, can be a welcome relief to all concerned parties.

Fortunately, researchers and device manufacturers are making reasonable headway in the field of ‘micro energy harvesting’. Macro-level energy harvesting, in the form of windmills, hydro-electric turbines, etc, has been around for a long time. Now, the trend is towards micro-level harvesting of energy from body heat, vibrations, sunlight, wind, and so on, to scavenge micro-watts of power to run ultra-low power devices.
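To see why micro-watts can be enough, it helps to work out a rough power budget. The sketch below assumes a sensor that sleeps most of the time and wakes briefly to measure and transmit; the function names and all three power figures are hypothetical, chosen only to illustrate the arithmetic, and are not taken from any real device.

```python
def average_load_uw(active_uw, sleep_uw, duty_cycle):
    """Average power draw (in microwatts) of a device that is active for
    the fraction `duty_cycle` of the time and asleep for the rest."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must lie between 0 and 1")
    return duty_cycle * active_uw + (1.0 - duty_cycle) * sleep_uw


def max_duty_cycle(harvest_uw, active_uw, sleep_uw):
    """Largest active fraction the harvested power can sustain on average."""
    if harvest_uw <= sleep_uw:
        return 0.0  # the harvester cannot even cover the sleep current
    if harvest_uw >= active_uw:
        return 1.0  # the device could stay active all the time
    return (harvest_uw - sleep_uw) / (active_uw - sleep_uw)


# Hypothetical figures, chosen only for illustration.
HARVEST_UW = 100.0   # power scavenged from, say, vibration
ACTIVE_UW = 3000.0   # draw while sensing and transmitting
SLEEP_UW = 5.0       # draw while asleep

if __name__ == "__main__":
    load = average_load_uw(ACTIVE_UW, SLEEP_UW, 0.01)
    duty = max_duty_cycle(HARVEST_UW, ACTIVE_UW, SLEEP_UW)
    print(f"Load at 1% duty cycle: {load:.2f} uW")
    print(f"Sustainable duty cycle: {duty:.1%}")
```

With these assumed numbers, a device that is awake only a few per cent of the time stays within what the harvester supplies, which is the basic idea behind running ultra-low power devices on scavenged energy.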

This positive trend can be attributed to two reasons. One, there has been considerable progress in the field of alternative energy – the constantly increasing efficiency of solar cells is a typical example. Two, thanks to superior design and engineering, current-generation electronics consume much less power and are amenable to being powered by alternative sources.

Of late, several interesting gadgets have been featured in the media – solar cells to supplement mobile phone batteries, vibration-powered sensors (that are ideal for use in automobiles and other environments where there is a lot of jolting), solar-powered wireless sensor networks, wireless and batteryless switches for use in building automation, heat- or vibration-powered medical implants such as hearing aids and pacemakers that harvest bodily energy using micro-generators manufactured as micro-electro-mechanical systems (MEMS), and so on.

In fact, an in-body micro-generator that converts energy from the heartbeat into power for implanted medical devices won the Emerging Technology Award at the Institution of Engineering and Technology’s (IET) Innovation Awards 2009, held recently in London.

Improved interconnect technologies for 3D ICs and 3D packages

The electronics industry continues to strive towards a common goal – to pack more functionality into smaller form factors. For this, the industry depends on constant reductions in the size of chip packages. Maintaining the tempo predicted by the famous Moore’s Law, integrated circuit (IC) makers are constantly improving their fabrication processes and designs to fit more components into smaller footprints. However, the electronics industry is not satisfied with even that – it wants more!

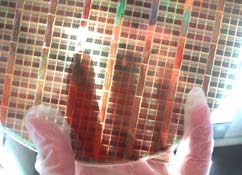

This has resulted in three-dimensional (3D) ICs and packages. A 3D IC is basically a stack of multiple silicon wafers and dies connected through vertical electrical connections, such as through-silicon vias (TSVs), in a way that they behave like a single device. Wikipedia defines a TSV as a “vertical electrical connection (via) passing completely through a silicon wafer or die.”

A step above, we have 3D packages, that is, interconnected stacks of two or more chips. The stacked chips may be wired together along their edges, but vertical connection using TSVs is now emerging as the more popular option since it does not add to the dimensions of the stack. There are several TSV designs and production technologies in use.

Of course, engineers are never satisfied – even as TSV is gaining popularity, next-generation technologies are already being demonstrated. One such wireless interconnect technology was described by Yasufumi Sugimori at the International Solid-State Circuits Conference (ISSCC) 2009. The technology used coupled inductors to send signals between stacked dies, across a distance of 120 µm. This coupling avoids the need for TSVs, saving the cost of that wafer processing step. According to Sugimori’s estimate, the power spent communicating through the stacked chips will be only half of what is spent in today’s stacked chip packages, while the area spent on communication circuits can be reduced by a factor of 40.

Bioelectronics

This is the age of technological convergence – most significant breakthroughs happen at the interface of two or more fields of science. Such a conjunction of electrical and electronics engineering with biology, physics, chemistry and material sciences is going to be the future of healthcare. We are talking about technology so advanced that it will make current equipment like cardiac pacemakers and blood glucose meters seem very simple!

‘Wetware’ comprising bioelectronics and biosensors will make it possible to measure and understand biological systems in greater detail and with unprecedented accuracy. This will result in advanced lab-on-a-chip biomedical diagnosis, implantable neural interfaces, the ability to restore a patient’s lost vision or reverse the effects of spinal cord injuries, etc. An EETimes.com report cites: “Lab-on-a-chip is one manifestation of the technology…but it is also possible to grow biological cells on electronically addressable substrates. The opportunities for in-vitro diagnostics are clear. Information about the electrical behaviour of individual cells and their reactions to drugs is a major focus for research in cardiac and neural ailments such as Alzheimer’s or Parkinson’s disease.”

Bioelectronics shows a lot of promise now, thanks to the rapid increase in the understanding of biological systems and processes at the nano-scale, advances in semiconductor technology and in surface chemistry related to the interface of biology and man-made devices, availability of micro energy sources, biosensors and such miniature measurement systems, and the materials and fabrication techniques for bio-electronic devices, medical implants, 3D assembly, self-assembly, nano-particles, nano-tubes, nano-wires, etc.

A report by the US National Institute of Standards and Technology (NIST) beautifully describes how it is not just a case of miniature electronics and MEMS enhancing medical treatments but also a case wherein the electronics industry learns from the ways and means of biological systems to enhance its own capabilities at the nano-scale. The 2009 report, titled A Framework for Bioelectronics: Discovery and Innovation, states that: “understanding biology may provide powerful insights into efficient assembly processes, devices, and architectures for nano-electronics technology, as physical limits of existing technologies are approached… Advances in bioelectronics can offer new and improved methods and tools while simultaneously reducing their costs, due to the continuing exponential gains in functionality-per-unit-cost in nanoelectronics (aka Moore’s Law). These gains drove the cost per transistor down by a factor of one million between 1970 and 2008 (for comparison, over the same period, the average cost of a new car rose from $3,900 to $26,000) and enabled unprecedented increases in productivity.”
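The figures quoted in the report can be turned into yearly rates with a little arithmetic. The sketch below uses only the numbers in the quotation (a million-fold fall in cost per transistor and a rise in car prices from $3,900 to $26,000, both over 1970 to 2008) and assumes, for simplicity, that each change happened at a steady compound rate; the function names are illustrative.

```python
import math


def halving_time_years(reduction_factor, years):
    """Years needed for a cost to halve, assuming it fell by
    `reduction_factor` at a constant compound rate over `years`."""
    return years / math.log2(reduction_factor)


def annual_growth_rate(start, end, years):
    """Constant yearly growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1.0 / years) - 1.0


YEARS = 2008 - 1970  # the 38-year span quoted in the report

if __name__ == "__main__":
    halving = halving_time_years(1_000_000, YEARS)
    car_rate = annual_growth_rate(3_900, 26_000, YEARS)
    print(f"Cost per transistor halved roughly every {halving:.1f} years")
    print(f"New car prices rose by about {car_rate:.1%} a year")
```

Under this steady-rate assumption, the cost per transistor halved roughly every 1.9 years, while car prices grew by about 5.1 per cent a year over the same period.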

This win-win outlook will contribute further to the growth of bioelectronics. Several industry majors including IBM, Intel, Texas Instruments and Freescale are involved in the research and development of wetware. If there is as much development in the field as we expect to see, it will, over time and as prices fall, lead to revolutionary changes in national security, healthcare and economic development too.

New battery technologies

The electronics industry has been working towards the twin goals of reducing the size of devices and making them work for a longer time. Both goals are being achieved today mainly because of advances in IC technology – today’s chips are not only very small but are also designed to consume very little power, by reducing energy leakage, etc. As a result, the devices they are embedded in also have smaller size and a longer battery life.

Battery technology, on the other hand, has not kept pace with this development – batteries have neither become significantly smaller nor has there been any significant improvement in their capacity. This has been a much-discussed topic over the past few years.

The trend seems to be shifting, and we now hear of a lot of research and advancements in battery technologies. Perhaps over the next year, some of these might get incorporated into products, to result in cellphones, laptops and other devices with a much longer battery life and smaller footprint than the current ones.

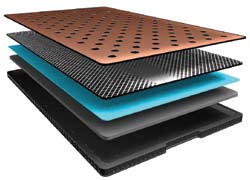

One notable development is the use of thin-film technology to make power sources that are especially suitable for applications such as smart cards and sensors. These batteries can be flexible if fabricated on thin plastics. They charge fast, offer good energy and power densities in a small form factor, demonstrate very low equivalent series resistance (ESR) and tolerate a wide range of temperatures. Since their self-discharge is negligible, they can be used for a period of around a decade or so. Companies like Oak Ridge Micro Energy and Front Edge Technology have commercialised thin-film power sources.

Then, there is a Swiss company called ReVolt that is trying to adapt the button-type zinc-air battery for use with larger devices like laptops and mobile phones. Since zinc-air batteries have a much higher energy density than the traditional lithium-ion batteries used currently with laptops, ReVolt’s ventures could result in a tripling of the battery life of such devices.
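The link between energy density and battery life is simple proportionality: for a battery of the same volume driving the same load, runtime scales with the energy stored per litre. The sketch below illustrates this with hypothetical figures; the densities, volume and load are placeholders chosen so that the ratio is three, not measured values for any ReVolt or lithium-ion product.

```python
def runtime_hours(energy_density_wh_per_l, volume_l, load_w):
    """Hours a battery of the given volume can drive a constant load."""
    if load_w <= 0:
        raise ValueError("load_w must be positive")
    return energy_density_wh_per_l * volume_l / load_w


# Hypothetical values, for illustration only.
LITHIUM_ION_WH_PER_L = 250.0
ZINC_AIR_WH_PER_L = 3 * LITHIUM_ION_WH_PER_L  # assumed threefold density
BATTERY_VOLUME_L = 0.1
LAPTOP_LOAD_W = 10.0

if __name__ == "__main__":
    li_ion = runtime_hours(LITHIUM_ION_WH_PER_L, BATTERY_VOLUME_L, LAPTOP_LOAD_W)
    zinc_air = runtime_hours(ZINC_AIR_WH_PER_L, BATTERY_VOLUME_L, LAPTOP_LOAD_W)
    print(f"Lithium-ion: {li_ion:.1f} h, zinc-air: {zinc_air:.1f} h")
```

Whatever the real densities turn out to be, the ratio of the two runtimes equals the ratio of the two energy densities, as long as the battery volume and the load stay the same.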

Plus, a look at current research hints at the possible replacement of the good old alkaline manganese dioxide-based batteries with newer nickel- and lithium-based chemistries such as nickel oxyhydroxide and olivine-type lithium iron phosphate, and with electrodes built from nanowires.

Battery technology seems to be a happening area of research, and any significant breakthroughs will provide a good break for the electronics industry.

Tomorrow’s connected home

Bill Gates envisioned and implemented a smart and connected home many years ago, but in the next few years even our homes might become connected to a reasonable extent. The term ‘connect’ is now being used beyond the context of just mobile phones and computers. Numerous devices have the capability to connect to home wireless networks and the Internet – home theatre systems, gaming consoles, digital photo frames, cameras, printers, and more.

Several efforts are under way to take home networking to the next level.