According to a recent report in The Guardian, titled ‘Technology Makes Higher Education Accessible To Disabled Students,’ technology plays a huge role in bridging the gap between people with disabilities and everyone else. It cites America’s Student E-rent Pilot Project (STEPP) as an example. The programme offers e-textbooks specifically modified for accessibility. In a subsequent survey of 1,185 students, 77 per cent reported having saved money by renting their textbooks, and 80 per cent of those who needed an accessible textbook were satisfied with the quality of accessibility.

This is just one example of how technology makes life much simpler, more exciting and normal for people with various disabilities. A variety of electronic aids, such as hearing aids, implants, prosthetic limbs, assistive canes and wheelchairs, help special people overcome their disabilities. Today’s operating systems are accessible to people with hearing, visual, comprehension and other impairments. Technologies are available that enable challenged people to play great music. Specially-designed user interfaces and devices help autistic children use touchscreen-equipped computers. Advanced text-to-speech converters and a vast library of audio books enable visually-challenged people to complete higher education smoothly. Speech, auto-recognition and other technologies enable special people to use ATMs and other facilities in much the same way as others do.

Identifying the need, assessing the requirements in detail, developing suitable technologies and testing them are intense tasks, quite different from developing mainstream technologies. Designers and developers of special technologies need empathy and a complete understanding of the requirements, behaviour and usage patterns of the people concerned. They need to keep the product cost-effective and simple. The product needs to be tested with the target users, with patience and sensitivity to their sentiments. Whenever possible, the product must also be adaptable to the needs of people with other kinds of impairments. (In fact, this is a consideration that developers of mass-market products should also keep in mind.)

Developing technologies for special people requires as much thoughtfulness and people skills as technical prowess. The results, of course, are worth the effort, as such projects offer complete personal satisfaction.

We salute the special people of this world, who march ahead, succeed and make things happen despite all odds, and the technologists who help them do so. It is not possible to cover all, or even most, of these technologies, so we have chosen and described a handful that stood out as different.

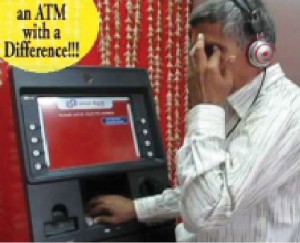

Voice-guided ATMs for the visually-challenged

“India has one of the largest visually-impaired populations in the world. The Reserve Bank of India, in its circulars in 2008 and 2009, stated that all banking services including ATM cards should be offered to customers with disabilities, and without any discrimination. NCR Corporation—India’s largest ATM manufacturer—wanted to reach out to this population and give them a chance to transact independently. Through research, NCR understood the banking challenges that a visually-impaired person usually faces and thereafter developed the Talking ATM—a customised ATM that will benefit such customers while also maintaining the safety of the transaction. We’ve been in constant discussions with the Xavier’s Resource Centre for the Visually Challenged (XRCVC) to identify the needs of visually-impaired people before launching the Talking ATMs,” says Navroze Dastur, managing director, NCR India.

(Courtesy: www.addressofwealth.com)

The Talking ATM is also attractive to banks because they don’t have to issue any special cards—a regular ATM card will work on the Talking ATM too!

NCR’s Talking ATM uses a text-to-speech engine that reads out the text on the screen in multiple languages for the customer's convenience. The machine incorporates software and hardware features that let a person with a disability operate it independently while keeping the transaction secure. It also has accessible keypads and Braille stickers.

When a visually-challenged person plugs a headphone set into this ATM, the machine speaks its instructions, and the customer enters the required data using the numeric keypad. Apart from reading screen messages aloud, the machine orients the customer to its use, making the transaction easy to complete.

An important security feature of this ATM is the option to blank out the screen, which protects the customer against shoulder-surfing by bystanders.
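The interaction described above can be pictured with a small Python sketch. It is a toy model built on assumptions, not NCR's software: the class name, the method names and the wording of the prompts are all hypothetical, and a plain list stands in for the text-to-speech engine.

```python
from dataclasses import dataclass, field


@dataclass
class TalkingAtmSession:
    """Toy model of a voice-guided ATM session (hypothetical, for illustration only)."""

    headphones_connected: bool = False
    screen_blanked: bool = False
    # Stand-ins for the audio channel and the display.
    spoken: list = field(default_factory=list)
    displayed: list = field(default_factory=list)

    def plug_in_headphones(self) -> None:
        """Plugging in a headset switches the session to voice guidance."""
        self.headphones_connected = True
        # Hypothetical greeting; the real prompts are not given in the article.
        self.prompt("Welcome. You may blank the screen for privacy.")

    def blank_screen(self) -> None:
        """Hide on-screen text to guard against shoulder-surfing."""
        self.screen_blanked = True

    def prompt(self, text: str) -> None:
        """Speak the message if a headset is present; show it unless the screen is blanked."""
        if self.headphones_connected:
            self.spoken.append(text)
        if not self.screen_blanked:
            self.displayed.append(text)


session = TalkingAtmSession()
session.plug_in_headphones()
session.blank_screen()
session.prompt("Enter your PIN using the numeric keypad.")
```

Once the screen is blanked, later prompts reach only the headset, so a bystander sees nothing while the customer still hears every instruction.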

The system is completely Access for All (AFA) compliant, and also includes ramps for those with other physical disabilities. Banks can also make their existing ATM network AFA-compliant by customising the ATM software stack and upgrading the hardware configuration of the ATM fleet.

NCR has showcased its ‘Talking ATM’ at different workshops conducted by XRCVC over the last couple of years. “In June 2012, NCR got the order of a hundred Talking ATMs from the Union Bank of India for deployment in passport offices and other UBI branches across India. This shows that Indian banks and financial institutions are quickly realising the need to adapt self-service technologies to include millions of differently-abled people into the financial stream. NCR is committed to helping these institutions by providing innovative technologies, most of which are conceptualised, created and manufactured in India,” says Dastur.

NCR supplies, deploys and services these ATMs. Any financial institution can upgrade its existing ATMs to Talking ATMs by making minor, relatively inexpensive changes to the hardware and software configuration. The price therefore depends on the features added and the scale of the roll-out.