Nature has given us an optical system that no technology has yet matched. Each eye forms its own two-dimensional (2D) picture of the world; the brain processes the two pictures and gives us depth perception.

People with sight in only one eye also perceive depth, because they rely on the other biological tools provided by nature, although their vision is monocular overall. The tools that enable depth perception include:

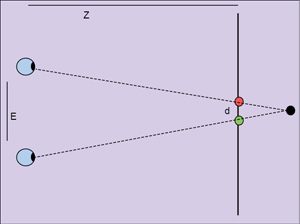

1. Binocular vision. According to binocularity.org, our two eyes form images of a point that are slightly separated, by a distance denoted ‘d’ (Fig. 2), and the brain fuses those two different images into a single image with depth.

From this we can conclude that the larger the separation between the eyes, the larger the difference between the two images and the stronger the depth perception.

2. Accommodation. This is the ability to focus on close as well as distant objects. In doing so, the shape of the eye's lens changes, and this gives the brain a clue about depth.

3. Parallax. This is the apparent difference in the position of an object when it is viewed from different positions.

4. Size familiarity. The brain estimates distance when it already knows an object's size. For example, take two pencils of the same size and place them at different distances. The one that looks bigger is closer, yet the brain still interprets both as being the same size.

5. Aerial perspective. This is related to contrast, which is the difference in colour and brightness within the same field of view. Human eyes are more sensitive to contrast than to absolute luminance. Because light is scattered on its way to the eye, a closer object has better contrast than a distant one.
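
The loss of contrast with distance in aerial perspective can be illustrated with a small sketch. It assumes a simple exponential attenuation model for an object seen against the horizon sky, which the article itself does not specify; the function name and the extinction coefficient are hypothetical values chosen only for illustration.

```python
import math

def apparent_contrast(inherent_contrast, distance_m, extinction_per_m):
    """Contrast left after light has travelled distance_m through a scattering medium.

    Assumes exponential attenuation; extinction_per_m is an illustrative
    scattering coefficient, not a measured value.
    """
    return inherent_contrast * math.exp(-extinction_per_m * distance_m)

# Same object, same inherent contrast, seen at two distances.
near = apparent_contrast(0.8, distance_m=100, extinction_per_m=1e-3)
far = apparent_contrast(0.8, distance_m=5000, extinction_per_m=1e-3)
print(f"near: {near:.3f}, far: {far:.3f}")
```

Under this assumption the distant object always ends up with the lower contrast, which is the cue the brain reads as depth.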

These tools are used by our visual system simultaneously. However, owing to the limitations of technology, 3D videos use only a combination of three or four of them.

Some eye problems and diseases prevent us from perceiving depth. These include loss of vision in one eye, amblyopia (loss of the eye's ability to see details), strabismus (when the two eyes do not line up in the same direction) and optic nerve hypoplasia (an underdeveloped optic nerve).

The technology

In movies, depth perception is achieved through colour, contrast, movement, perspective and spatial presentation. The first 3D film was presented at the Astor Theatre, New York, on June 10, 1915.

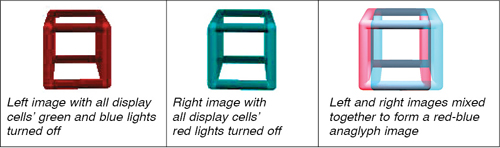

Early methods used anaglyphs (wavelength-specific encoding) to produce the stereoscopic 3D effect. The most common colour combination was red and cyan. Viewers were given eyeglasses with a different wavelength-specific filter for each eye.

The two filter colours have to be complementary, that is, opposite each other on the colour wheel, to avoid crosstalk between the two independent images seen by the eyes. By convention, the red (RGB:255,000,000) filter is worn on the left eye and the cyan (RGB:000,255,255) filter on the right. Each filter passes light of its own colour and blocks the complementary colour, so a feature drawn in the complementary colour appears dark and is seen by that eye only. Anaglyphs thus rely on stereoscopic vision, which exploits the binocular disparity of our eyes.
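
The red and cyan encoding described above can be sketched in a few lines. This is a minimal illustration, assuming each view is an RGB array and that the eye behind the red filter receives the red channel while the other eye receives green and blue; `make_anaglyph` and its parameter names are hypothetical, not taken from any particular tool.

```python
import numpy as np

def make_anaglyph(red_view, cyan_view):
    """Combine two RGB views (H x W x 3 arrays) into one red-cyan anaglyph.

    red_view  -- the view meant for the eye behind the red filter
    cyan_view -- the view meant for the eye behind the cyan filter
    """
    if red_view.shape != cyan_view.shape:
        raise ValueError("both views must have the same shape")
    anaglyph = np.empty_like(red_view)
    anaglyph[..., 0] = red_view[..., 0]     # red channel comes from one view
    anaglyph[..., 1:] = cyan_view[..., 1:]  # green and blue (cyan) from the other
    return anaglyph

# Tiny synthetic views: one uniformly bright, one uniformly dark.
bright = np.full((2, 2, 3), 200, dtype=np.uint8)
dark = np.full((2, 2, 3), 50, dtype=np.uint8)
combined = make_anaglyph(bright, dark)
```

Because the function takes the views by filter colour rather than by eye, it works whichever eye wears the red filter.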


Basic classification

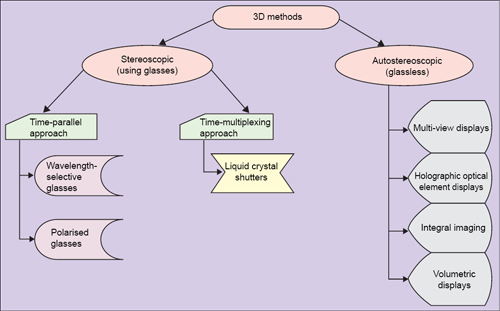

3D content can be viewed with or without glasses. Stereoscopic methods use glasses to produce the sensation of depth. These methods in turn use either a time-parallel or a time-multiplexing approach.

In the time-parallel approach, the left and right views are displayed simultaneously. For this, viewers must wear wavelength-selective filters or polarised glasses.

In the time-multiplexing approach, the left and right views are displayed alternately. The two views are stored in separate frame buffers and the screen is refreshed from each in turn. For time-multiplexing, liquid crystal shutter glasses are used.

Autostereoscopic method. With this method, 3D content can be shown without glasses. It covers several kinds of display that work on different principles, such as integral imaging, holography and the use of voxels to project depth.
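
The alternation between two frame buffers can be sketched as follows. This is only an illustration of the idea, with plain Python lists standing in for the frame buffers; the function names are hypothetical.

```python
def time_multiplexed(left_frames, right_frames):
    """Yield (eye, frame) pairs, alternating left and right on each refresh."""
    for left, right in zip(left_frames, right_frames):
        yield ("L", left)
        yield ("R", right)

def per_eye_rate(display_refresh_hz):
    """Each eye sees every second refresh, so it gets half the display rate."""
    return display_refresh_hz / 2

left_buffer = ["L0", "L1", "L2"]   # stand-ins for left-view frames
right_buffer = ["R0", "R1", "R2"]  # stand-ins for right-view frames
sequence = list(time_multiplexed(left_buffer, right_buffer))
print(sequence)
print(per_eye_rate(120))
```

The second function makes the cost of this approach explicit: whatever the display's refresh rate, each eye effectively sees half of it.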

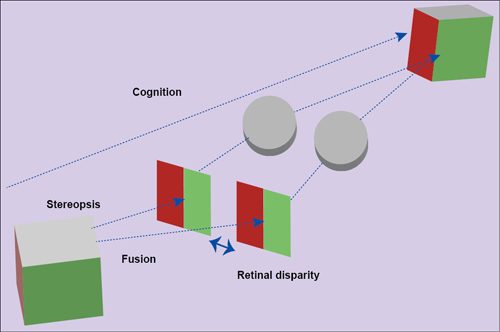

Stereoscopic method. The basic idea behind this is parallax: the greater the parallax, the stronger the stereoscopic sensation. Our binocular vision gives us horizontal parallax, that is, two views of an object from horizontally separated positions.

The inter-ocular distance is about 6.35cm (2.5-inch), which makes the eyes form slightly different images of an object, causing retinal disparity. The brain then combines these two 2D images into one perspective image, called the stereoscopic image.

Wavelength-selective glasses: anaglyphs. In 1858, Joseph D’Almeida projected 3D magic lantern slides using red and blue filter glasses. Louis Ducos du Hauron later created the first printed anaglyph using the colour printing available at the time. An anaglyph is a 3D image created from two photographs taken approximately 6.35cm (2.5-inch) apart.
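
The relation between eye separation and disparity can be made concrete with a little geometry. The sketch below assumes a point straight ahead of the viewer and uses the 6.35cm inter-ocular distance quoted above; the function names are hypothetical and the viewing distances are arbitrary examples.

```python
import math

IOD_M = 0.0635  # inter-ocular distance from the text, in metres

def vergence_angle_deg(distance_m, baseline_m=IOD_M):
    """Angle between the two eyes' lines of sight to a point straight ahead."""
    return math.degrees(2 * math.atan(baseline_m / (2 * distance_m)))

def relative_disparity_deg(near_m, far_m, baseline_m=IOD_M):
    """Difference in vergence angle between a nearer and a farther point."""
    return vergence_angle_deg(near_m, baseline_m) - vergence_angle_deg(far_m, baseline_m)

# Disparity between points at 0.5 m and 1 m, for the normal baseline
# and for a hypothetical baseline twice as wide.
normal = relative_disparity_deg(0.5, 1.0)
wide = relative_disparity_deg(0.5, 1.0, baseline_m=2 * IOD_M)
print(f"normal baseline: {normal:.2f} deg, doubled baseline: {wide:.2f} deg")
```

For the same pair of points, the wider baseline produces the larger disparity, which is why two cameras are placed roughly one eye separation apart when realistic depth is wanted.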

Disadvantages.

1. High crosstalk, because the colour filters are not perfectly matched

2. Reduced perception of colours

3. Different people have different chromatic adaptation, which leads to eye irritation

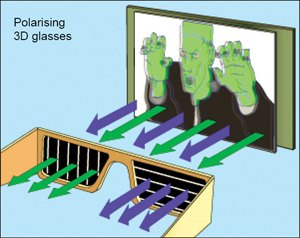

Polarising glasses. The idea is based on giving the two images different polarisations. To overcome the reduced colour perception of anaglyphs, a projector projects the two views onto the screen with different polarisations.

To separate the views, we need to wear special glasses that allow only one image into each eye. As the glasses contain polarised lenses, each lens passes only light of a specific orientation.

However, the problem with this was that when the two views are polarised on alternate rows, each eye receives only every other row, so the resolution is halved. The solution that came up was spatial multiplexing, in which the left and right pixels are arranged in a checkerboard pattern, with the left pattern being the inverse of the right. This provided better sampling and thus better perceived resolution than row interleaving.
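
The two ways of sharing one screen between the views can be compared in a short sketch. It assumes both views are arrays of the same shape and uses constant test images so the pattern is easy to see; the function names are hypothetical.

```python
import numpy as np

def row_interleave(left, right):
    """Even rows from the left view, odd rows from the right view."""
    out = left.copy()
    out[1::2] = right[1::2]
    return out

def checkerboard_interleave(left, right):
    """Alternate left and right pixels in a checkerboard pattern."""
    rows, cols = np.indices(left.shape[:2])
    use_left = (rows + cols) % 2 == 0
    if left.ndim == 3:               # broadcast the mask over colour channels
        use_left = use_left[..., None]
    return np.where(use_left, left, right)

left = np.zeros((4, 4), dtype=np.uint8)   # left view marked with 0
right = np.ones((4, 4), dtype=np.uint8)   # right view marked with 1
print(row_interleave(left, right))
print(checkerboard_interleave(left, right))
```

In both layouts each eye still gets half of the pixels, but the checkerboard spreads the missing samples across rows and columns instead of dropping whole rows.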

LCD shutter glasses. This concept is based on real-time processing by the computer that provides control signals to the glasses.

In this case, the liquid crystal layers make the lenses lighten and darken in step with the refresh rate of the display. An IR sensor on the glasses picks up the signal that tells them when to darken and lighten each eye's filter. This provides a much more realistic image. The refresh rate determines the quality of the 3D.

For watching high-speed movies or sports in 3D, a refresh rate of about 200Hz is needed. Plasma TVs are a reliable option as they have a refresh rate of 600Hz. Many television and computer manufacturers use this technique. The disadvantage is that everything depends on the refresh rate: a non-uniform refresh rate causes the screen to flicker, and very few monitors provide the required compatibility.

Autostereoscopic methods

The concept is glasses-free 3D. There are two possible approaches:

1. Eye tracking. To create 3D that matches the eye position, a camera constantly observes the head position so that the screen can be mechanically shifted. Two-view displays are one example. But this approach has not been successful because, with many viewers, it leads to flickering.