As higher targets are set to increase profits and decrease operating costs, test engineers are under ever-increasing pressure to improve the efficiency of their testing operations and get higher throughput from the same number of work hours. Read on for some simple ways to enhance your testing productivity.

DILIN ANAND

JUNE 2012: Chips are becoming faster and more efficient, in keeping with Moore’s Law. This is pushing consumer electronics technologies to new heights while making them all the more complex. Smarter test equipment is required to keep up with the new technology. Test engineers also need to refine their techniques in order to enhance the productivity of their operations. Here is how you can improve your test performance.

Upgrade embedded controller, lower measurement time

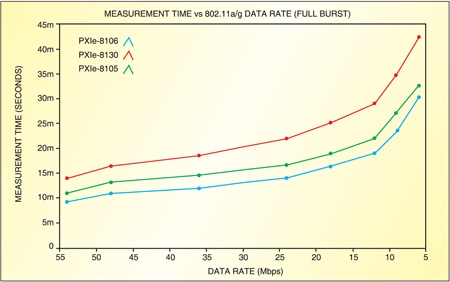

As Intel and AMD come up with newer microarchitectures for their processors, there is a definite impact on the system controller and, in turn, on testing performance and productivity. The just-released Ivy Bridge microarchitecture, a 22nm die-shrink of the previous Sandy Bridge microarchitecture, brings in a plethora of new features that enhance computing performance.

If you go for an embedded controller whose processor has a large cache, more of the working data stays in the cache and the controller does not have to access the comparatively slower DDR3 RAM as often. The lower latency of the cache will, in turn, improve processor performance, delivering faster measurements and results. This is especially true for operations that require intensive signal and data processing.

“Especially for RF measurements and RF protocol testing, CPU performance is often the single most significant factor preventing faster measurement performance. While actual system performance can depend on a variety of factors such as memory available and other applications running in the background, a strong correlation exists between the CPU performance and measurement time for automated test systems,” explains David Hall, product manager for RF and Communications at the National Instruments Dev Zone.

If your system has a recent multi-core processor, you can further enhance the productivity of your test system. By running the test tasks as multiple threads spread across the cores, you can reduce the testing time. Tasks such as frequency analysis depend heavily on the processor’s computing performance. By optimising the algorithm to distribute the measurement channels across the cores, you can improve processor throughput: computing time drops because the processor can execute the Fast Fourier Transform (FFT) frequency analysis of several channels simultaneously, one on each core.
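The idea of giving each channel its own core can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the method used by any particular test platform: the function names (`dominant_bin`, `make_tone`, `analyse_channels`) are hypothetical, the channels are synthetic sine waves, and a plain discrete Fourier transform stands in for an optimised FFT library so that the example needs nothing beyond the standard library.

```python
import cmath
import math
from concurrent.futures import ProcessPoolExecutor


def dominant_bin(samples):
    """Return the index of the strongest non-DC frequency bin (naive DFT)."""
    n = len(samples)
    best_bin, best_mag = 0, 0.0
    for k in range(1, n // 2):
        acc = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n)
                  for t in range(n))
        if abs(acc) > best_mag:
            best_bin, best_mag = k, abs(acc)
    return best_bin


def make_tone(bin_index, n=256):
    """Synthetic channel: a sine wave with bin_index whole cycles in n samples."""
    return [math.sin(2 * math.pi * bin_index * t / n) for t in range(n)]


def analyse_channels(channels, executor):
    """Submit one frequency-analysis job per channel to the given executor."""
    return list(executor.map(dominant_bin, channels))


if __name__ == "__main__":
    # Four hypothetical measurement channels, each carrying a different tone.
    channels = [make_tone(b) for b in (5, 12, 31, 60)]
    # A process pool spreads the per-channel jobs over the available cores.
    with ProcessPoolExecutor() as pool:
        print(analyse_channels(channels, pool))
```

Because each channel is analysed independently, the jobs need no coordination, which is what makes this kind of workload a good fit for multi-core controllers. How much time is actually saved depends on the number of cores and on the size of each job.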

Implement pipelining, improve throughput

Pipelining is a method used in a variety of fields to improve the throughput of an assembly-line-like system. It does not reduce the time to execute a single test, but rather increases the number of units that can be under test at the same time. Note, however, that in pipelining every test cycle takes as long to complete as the slowest test, so faster tests sit idle until the cycle ends. In most cases, the gain from implementing pipelining far outweighs this minor loss. Pipelining works best when each test takes almost the same time, as this minimises wasted time.

Pipelining works by delaying the start of testing of each successive unit by one cycle relative to the previous unit. This stagger allows the test sequences of different units to run concurrently. Table I shows improvements in performance due to pipelining.
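The arithmetic behind pipelining can be checked with a short Python sketch. It assumes that every test stage has its own instrument and that a cycle lasts as long as the slowest stage; the stage names, stage times and unit count are hypothetical and are not taken from Table I.

```python
def pipelined_schedule(num_units, stages):
    """Return one dict per cycle, mapping unit index -> stage being run.

    Unit u starts u cycles after unit 0, so in cycle c it runs stage c - u.
    """
    total_cycles = num_units + len(stages) - 1
    schedule = []
    for cycle in range(total_cycles):
        running = {}
        for unit in range(num_units):
            step = cycle - unit
            if 0 <= step < len(stages):
                running[unit] = stages[step]
        schedule.append(running)
    return schedule


def sequential_time(num_units, stage_times):
    """Total time when each unit finishes all stages before the next starts."""
    return num_units * sum(stage_times)


def pipelined_time(num_units, stage_times):
    """Total time when units are staggered and each cycle lasts as long
    as the slowest stage."""
    cycles = num_units + len(stage_times) - 1
    return cycles * max(stage_times)


if __name__ == "__main__":
    stages = ["power-up", "RF measurement", "functional check"]  # hypothetical
    stage_times = [2.0, 3.0, 2.5]  # seconds per stage, hypothetical
    for cycle, running in enumerate(pipelined_schedule(4, stages), start=1):
        print(f"cycle {cycle}: {running}")
    print("sequential:", sequential_time(4, stage_times), "s")
    print("pipelined: ", pipelined_time(4, stage_times), "s")
```

With these assumed numbers, four units take 30 seconds when tested one after another and 18 seconds when pipelined (six cycles of 3 seconds each), even though each individual unit now spends 9 seconds on the station instead of 7.5.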

Re-order and schedule, maximise instrument utilisation

Once you have faster processing and a pipelining set-up in place, the next step is to improve instrument utilisation using test automation software. Even when you optimise your processor to speed up the compute-intensive tasks, there is always a chance that your instruments will lie idle. To prevent such wastage of resources, auto-schedule tasks to run whenever an instrument is detected to be idling.

Not every set of tasks can be reordered, but those that can should be moved to fill the idle instrument cycles. If you compare Table II and Fig. 2, you will notice that this methodology allows you to further enhance productivity by reducing the test process from five cycles to three.
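The effect of re-ordering can be illustrated with a small Python sketch. It assumes three devices under test (DUTs), three tests that each need a different instrument for exactly one cycle, and tests that may run in any order. The rotation used here is one simple way to fill idle slots and is not necessarily how a commercial scheduler does it; the test names are hypothetical.

```python
def fixed_order_schedule(num_duts, tests):
    """Pipelined schedule in which every DUT runs the tests in the same order."""
    cycles = num_duts + len(tests) - 1
    return [
        {dut: tests[cycle - dut]
         for dut in range(num_duts)
         if 0 <= cycle - dut < len(tests)}
        for cycle in range(cycles)
    ]


def rotated_schedule(num_duts, tests):
    """Re-ordered schedule: DUT d starts at test d and rotates through the rest,
    so no two DUTs ever need the same instrument in the same cycle."""
    if num_duts > len(tests):
        raise ValueError("this sketch needs at least one test per DUT")
    return [
        {dut: tests[(dut + cycle) % len(tests)] for dut in range(num_duts)}
        for cycle in range(len(tests))
    ]


def idle_slots(schedule, num_instruments):
    """Count instrument-cycles in which an instrument has nothing to do."""
    return sum(num_instruments - len(cycle) for cycle in schedule)


if __name__ == "__main__":
    tests = ["spectrum", "power", "modulation"]  # one instrument each, hypothetical
    for name, build in (("fixed order", fixed_order_schedule),
                        ("re-ordered", rotated_schedule)):
        schedule = build(3, tests)
        print(name, "->", len(schedule), "cycles,",
              idle_slots(schedule, len(tests)), "idle instrument slots")
```

Under these assumptions, the fixed-order pipeline needs five cycles and leaves six instrument slots idle, while the re-ordered schedule finishes in three cycles with none idle. Tests that depend on an earlier test cannot be rotated this way and would have to keep their relative order.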

“You’ve effectively reduced your test time by 66 per cent, and you’re making the most of your instruments by cutting instrument downtime to zero. The bottom line is that by taking advantage of the advanced parallel testing capabilities of NI’s software-defined automated test platform, you can use one set of instruments (that is, one test station) to test multiple devices in parallel. This, in turn, means you can test the same number of devices with fewer test stations, thereby drastically reducing the capital cost of test equipment without sacrificing test throughput,” explains Satish Mohanram, business development manager, National Instruments India.