The new generation of consumer electronics devices converges Internet connectivity, wireless communications, high-fidelity audio and HD video into a single device. To keep up with the times, test and measurement manufacturers and design houses have adopted different strategies. Take a look.

DILIN ANAND

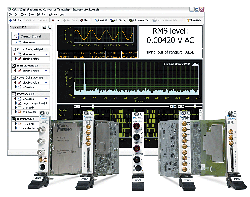

JULY 2012

1. FPGA-enabled instrumentation

With the growth of system-level design tools for field-programmable gate arrays (FPGAs) over the last few years, more and more manufacturers are building FPGAs into their instruments. What's more, engineers can reprogram these FPGAs to suit their requirements. Test engineers can therefore embed a custom algorithm in the device to perform in-line processing inside the FPGA, or even emulate the part of the system that requires a real-time response.

Satish Thakare, head of R&D, VLSI division, Scientech Technologies, explains the traditional challenges behind this trend: “Designers and manufacturers face many challenges in bringing a product to market in a short time. A hardware-based approach does not serve the purpose, as the designer has to redesign the hardware for every product. Even conventional methods will not serve the purpose, as they work sequentially. So designers need a technology that allows them to change the functionality without changing the hardware, while being able to upgrade the product on the go.”

Thakare goes on to explain the solution: “The obvious choice for the designer is reconfigurable hardware, i.e., the FPGA. The benefits of using FPGAs in instruments include high reliability, low latency, reconfigurability, high performance, embedded digital signal processor (DSP) cores and true parallelism.”

Besides digital functions, some FPGAs offer analogue features: mixed-signal FPGAs may integrate analogue-to-digital converters (ADCs) and digital-to-analogue converters (DACs) on the same chip.

Mahendra Pratap Singh, business development manager, TTL Technologies, adds, “Logic blocks can be configured to perform complex combinational functions and also include memory elements, which can be simple flip-flops or more complete blocks of memory. The architectural flexibility, customisation flexibility and cost advantage put FPGAs ahead of complementary technologies.”
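To make "configuring a logic block" concrete, here is a minimal Python sketch, assuming a LUT-based architecture in which each logic block holds a 4-input look-up table (LUT) feeding an optional D flip-flop. The class and function names are hypothetical, and the model ignores routing, carry chains and timing. It shows that a combinational function is simply a 16-entry truth table, and that "reprogramming" the block means loading a different table.

```python
from typing import Callable, List

# Hypothetical model: one logic block = a 4-input LUT plus an optional D flip-flop.
# Routing, carry chains and timing are deliberately ignored.


def lut_config(func: Callable[[int, int, int, int], int]) -> List[int]:
    """Return the 16 configuration bits that make a 4-input LUT implement `func`."""
    bits = []
    for index in range(16):
        a, b, c, d = ((index >> k) & 1 for k in range(4))  # bit k of the address = input k
        bits.append(func(a, b, c, d) & 1)
    return bits


class LogicBlock:
    def __init__(self, config: List[int], registered: bool = False) -> None:
        if len(config) != 16:
            raise ValueError("a 4-input LUT needs exactly 16 configuration bits")
        self.config = list(config)
        self.registered = registered
        self.q = 0  # flip-flop state, cleared at power-up

    def lut(self, a: int, b: int, c: int, d: int) -> int:
        # The combinational function is nothing more than a table lookup.
        return self.config[a | (b << 1) | (c << 2) | (d << 3)]

    def clock_edge(self, a: int, b: int, c: int, d: int) -> None:
        # On a clock edge the flip-flop captures the current LUT output.
        self.q = self.lut(a, b, c, d)

    def output(self, a: int, b: int, c: int, d: int) -> int:
        # A registered block drives its stored bit; otherwise the LUT output passes through.
        return self.q if self.registered else self.lut(a, b, c, d)


# Configure a block as 4-input parity, then "reprogram" it as a 3-input majority gate.
block = LogicBlock(lut_config(lambda a, b, c, d: a ^ b ^ c ^ d))
print(block.output(1, 1, 0, 1))  # 1
block.config = lut_config(lambda a, b, c, d: (a & b) | (b & c) | (a & c))
print(block.output(1, 0, 1, 0))  # 1
print(block.output(1, 0, 0, 0))  # 0
```

The same hardware performs two unrelated functions; only the configuration bits change, which is the flexibility the quote refers to.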

The most common FPGA-enabled test instrument in the industry is the digitiser, where the on-board FPGA allows faster processing of the digitised data.
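In-line processing on a digitiser typically means reducing or conditioning the sample stream before it leaves the instrument. As a minimal sketch, assuming a simple boxcar-average-and-decimate stage (one common choice; the article does not say which algorithms vendors implement), the Python generator below mimics the integer accumulator and counter such a stage would use in FPGA fabric. The name `boxcar_decimate` and the power-of-two restriction are illustrative assumptions.

```python
from typing import Iterable, Iterator


def boxcar_decimate(samples: Iterable[int], factor: int) -> Iterator[int]:
    """Average each non-overlapping block of `factor` ADC codes and emit one result.

    Uses only an integer accumulator and a counter, logic that maps easily onto
    FPGA fabric. Restricting `factor` to a power of two turns the divide into a shift.
    A trailing partial block is discarded.
    """
    if factor <= 0 or factor & (factor - 1):
        raise ValueError("factor must be a positive power of two")
    shift = factor.bit_length() - 1
    acc = 0
    count = 0
    for code in samples:
        acc += code
        count += 1
        if count == factor:
            yield acc >> shift  # divide by `factor` with an arithmetic right shift
            acc = 0
            count = 0


raw = [100, 104, 98, 102, 200, 196, 204, 200]
print(list(boxcar_decimate(raw, 4)))  # [101, 200]
```

Because only one value leaves the stage per `factor` input samples, the data rate to the host PC drops by that factor while noise on each output is averaged down.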

2. Wireless standards outbreak

As new wireless standards such as WLAN 802.11ac, WiMAX, LTE and the high-throughput 802.11ad roll out, test engineers in India and around the globe face ever greater challenges. Bharti Airtel has already launched its 4G service in Kolkata, making India one of the first countries in the world to commercially deploy this cutting-edge wireless technology. As RF and wireless applications become general-purpose, instrumentation may follow suit, with RF instruments becoming as essential as the digital multimeter.

With this explosion of standards, a common problem for test engineers is that they must repeatedly set up a separate test platform for each standard.

Sadaf Arif Siddiqui, technical marketing specialist at Agilent Technologies, provides more insight: “A test engineer working on fast-emerging standards may have to bear the pain of setting up different test instruments and different test platforms or software. Moving to easy-to-use, upgradeable, multi-standard vector signal analysis software and instruments such as X-series analysers will reduce this pain to a large extent, thereby optimising test time and costs.”

3. Increased use of wireless devices at the workplace

Tablet computers and smartphones have become so popular that they now have a significant presence in the workplace too: not as devices under test, but as part of the test system. While the computing power of these devices is notable, they cannot replace the PC and related measurement platforms like PXI. Instead, they are suited to viewing data and reports, and to monitoring and controlling test systems.

National Instruments’ Automated Test Outlook 2012 explains: “The explosion of mobile devices like tablets and smartphones provides compelling benefits to engineers, technicians and managers involved in automated test who need remote access to test status information and results. While today’s technology offers solutions for monitoring or remote reporting via mobile devices, test organisations will need new expertise to unite the networking, Web services and mobile app portions of the solution.”

4. Software-defined instrumentation

As products grow more complex, testing them becomes much more challenging. Test engineers now need test systems that are flexible enough to support the wide variety of tests performed on a single product, and scalable enough to accommodate more tests as new functionality is added.