In August 2013, computer scientists from Japan and Germany managed to simulate one per cent of human brain activity for a single second. To achieve this apparently simple task, they had to deploy as many as 82,000 processors. These processors were part of Japan’s K computer, the fourth most powerful supercomputer on Earth. The computer scientists simulated 1.73 billion virtual nerve cells and 10.4 trillion synapses, each of which contained 24 bytes of memory. The entire simulation consumed 40 minutes of real, biological time to produce one virtual second.

This shows the complexity and prowess of the human brain. It is extremely difficult to recreate human brain performance using computers, since the brain consists of a mindboggling 200 billion neurons that are interlinked by trillions of connections called synapses. As the tiny electrical impulses shoot across each neuron, these have to travel through these synapses, each of which contains approximately a thousand different switches that direct an electrical impulse.

Human beings have managed to automate increasingly complex tasks. However, perhaps, we have only seen the tip of the iceberg. While a large number of enterprises use information technology (IT) by way of thousands of diverse gadgets and devices, in majority of cases, it is human beings who operate these devices, such as smartphones, laptops and scanners. The intricacy of these systems and the way these link and operate together is leading to the scarcity of skilled IT manpower to manage all systems.

The smartphone has become an integral part of our life today as it often remains connected with our desktop, laptop and tablet. This concurrent burst of data and information and, further, its integration into everyday life is leading to new requirements in terms of how employees manage and maintain IT systems.

As we know, demand is currently exceeding supply of expertise capable of managing multi-faceted and sophisticated computer systems. Moreover, this issue is only growing with the passage of time and our increasing reliance on IT.

The answer to this problem is autonomic computing, that is, computing operations that can run without the need for human intervention.

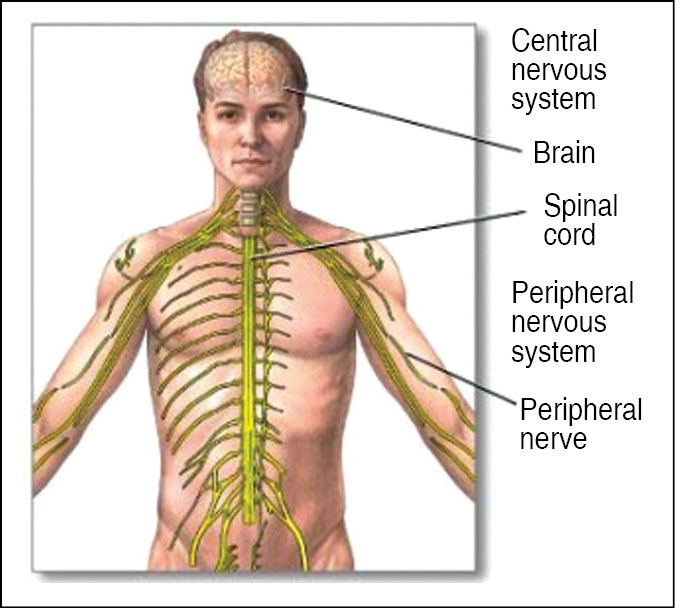

The concept of autonomic computing is quite similar to the way the autonomic nervous system (ANS) (Fig. 1) regulates and protects the human body. The ANS in our body is part of a control system that manages our internal organs and their functions such as heart rate, digestion, respiratory rate and pupillary dilation, among others, mostly below the level of our consciousness. The autonomy controls and sends indirect messages to organs at a sub-conscious level via motor neurons.

In a similar manner, autonomous IT systems are based on intelligent components and objects that can self-govern in rapidly varying and diverse environments. Autonomous computing is the study of theory and infrastructures that can be used to build autonomous systems.

In order to develop autonomous systems, we need to conduct interdisciplinary research across subjects such as artificial intelligence (AI), distributed systems, parallel processing, software engineering and user interface (UI).

Even though AI is a very important aspect for autonomic computing to work, we do not really need to simulate conscious human thoughts as such. The whole emphasis, today, is on developing computers that can be operated intuitively with minimum human involvement. This demands a system that can crunch data in a platform-agnostic manner. And much like the human body, this system is expected to carry out its functions and adapt to its user’s requirements without the need of the user to go into minute details of its functioning.