The 2008 terror attack on Mumbai’s Taj was fresh in everybody’s mind when budding engineers at M.V.J. College of Engineering were brainstorming over what to make for their college project. They decided to design a robot that could help rescue teams navigate more efficiently and with less risk in an emergency response situation. Thus a theory was developed, tested, and transferred to an autonomous surveillance robot.

The aim of their project was to build a robot that could perform various tasks in place of a soldier during indoor combat operations. It would carry out video and audio surveillance and transmit sensitive information to the base station. It would also map the trajectory of its path and let the data be acquired remotely using wireless communication technology.

Indoor Navigation Robot

The work was divided among the group members according to each person’s area of expertise. Three of them worked on mechanical fixtures, two on programming, two on circuit design and one oversaw the entire project.

Hardware and Software Used

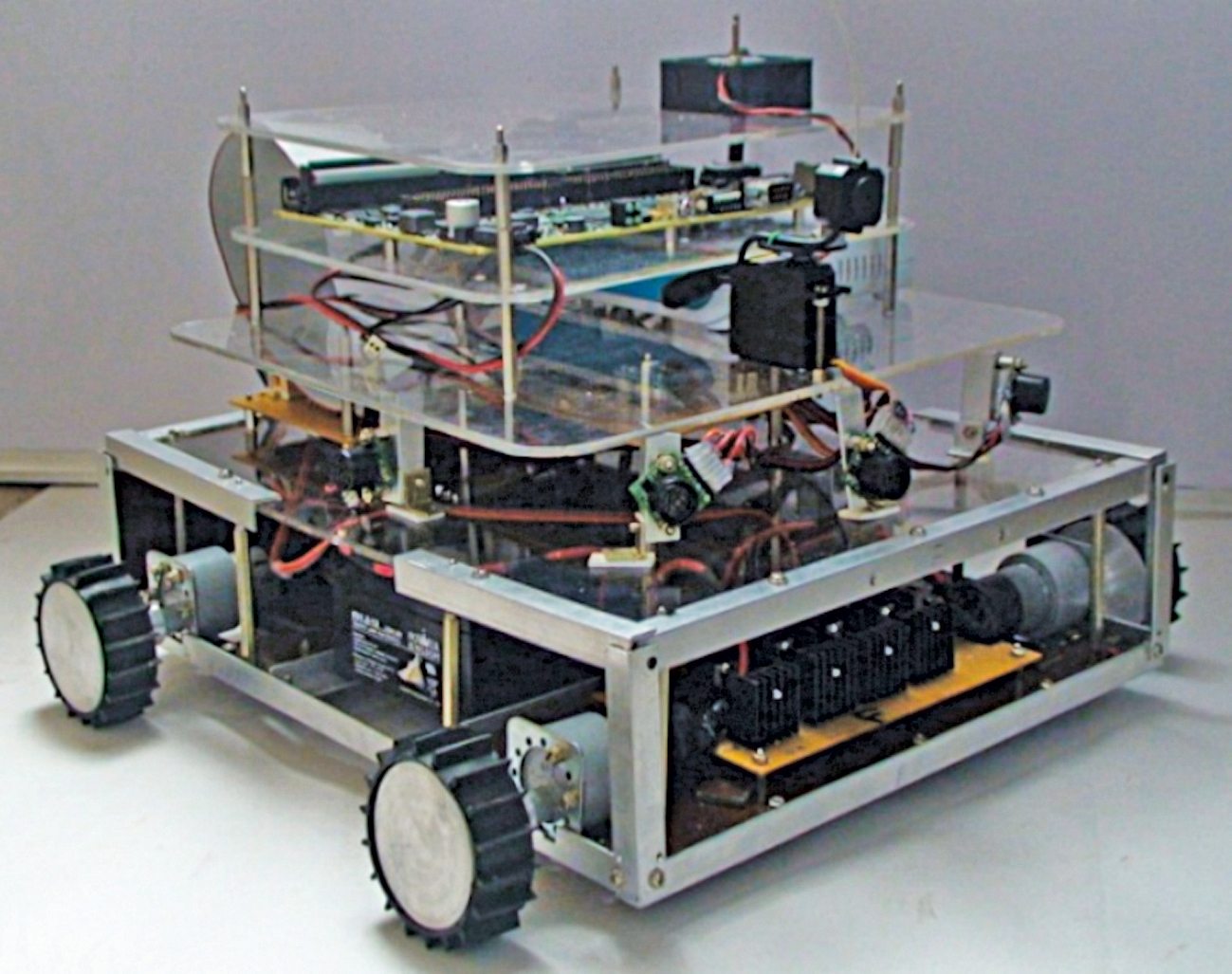

The robot has two layers—the base layer consists of a battery, motors and sensors, while the top layer consists of a multi-functional board, sensors, wireless router, camera and microphone. The device has open channels for 48 sensors and the prototype uses six at a given time.

“Since National Instruments (NI) allowed us to use their hardware and software, we used the LabVIEW platform, which provided an amazing front-end user panel that could display the calibrated results in a graphical user interface termed as G-language,” shares team member S. Arun Kumar. The language enabled faster programming of the robot. In other languages, writing the pages of code required for the device would have taken much longer. The code written in LabVIEW could easily be deployed to the sbRIO-9642 hardware over WiFi.

Kumar adds, “The sbRIO multi-functional board allows manipulation of analogue and digital frequencies. We also used NI’s software like the NI Robotics module, NI FPGA and NI RT. A customized motor driver circuit was developed by the team to drive the motors. Since both the hardware and software were developed by NI, it allowed their easy integration.”

The team also designed a signal-conditioning and filtering board, which picks up signals from the sensors and filters out noise. The design was highly flexible, as it provided a base to plug in any sensor and obtain the corresponding relative motions.

A group of students from M.V.J. College of Engineering participated in and won National Instruments’ Yantra design contest. NI then asked the group whether they would like to take up the challenge of designing, in fifteen days, an autonomous device for indoor navigation using its products. The students readily accepted the challenge and took it up as their college project, as they had prior experience in building robots.

With the guidance of their mentors Dr Krishnamoorthy, Kalyan Ram B. and V. Nalluswamy, the seven students—Rajshekar P., Gowrish B.V., Naveen Kumar S., Sandesh Nadiger, S. Arun Kumar, Panchaksharayya S. Hiremath and Vasanth Kumar K.—decided to design a vehicle for indoor navigation, with an option of plugging in sensors as per users’ requirement. The sensors can be developed by the user or purchased from NI.

Usability of the device

The primary function of the device is to navigate across a given territory in fully autonomous mode. However, the user can switch to manual or semi-autonomous mode and steer the device with the arrow keys on a laptop connected to it through WiFi. The device has a maximum speed of 80 cm per second and can navigate inside buildings and on various types of flooring. It also provides a platform for future development in navigation systems.

A sensor-based obstacle-avoidance system has been successfully incorporated into the device. It uses a MaxSonar sensor as the range finder and an NI Smart camera. The Parallax PING ultrasonic sensor detects objects by emitting a short ultrasonic burst and then listening for its echo.

Under the control of a host microcontroller, the sensor emits a short 40kHz ultrasonic burst. This burst travels through the air at a speed of about 344 meters (1130 feet) per second, hits the obstacle in front, and then bounces back to the sensor. The sensor provides an output pulse to the host, which terminates when the echo is detected. The width of this pulse corresponds to the distance of the object from the vehicle.
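The relation between pulse width and distance can be sketched in a few lines of Python. This is only an illustration, not the team's LabVIEW code: it assumes the pulse width equals the round-trip time of the burst, so the one-way distance is half the path travelled, and it uses the 344 m/s figure quoted above. The function name is hypothetical.

```python
# Speed of sound in air, as quoted in the article (metres per second).
SPEED_OF_SOUND_M_PER_S = 344.0


def pulse_width_to_distance_m(pulse_width_s: float,
                              speed_m_per_s: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Convert an echo pulse width (seconds) into obstacle distance (metres).

    Assumption: the pulse lasts for the full round trip, so the burst
    covers the sensor-to-obstacle distance twice.
    """
    if pulse_width_s < 0:
        raise ValueError("pulse width cannot be negative")
    return speed_m_per_s * pulse_width_s / 2.0


if __name__ == "__main__":
    # A 10 ms pulse corresponds to an obstacle roughly 1.72 m away.
    print(round(pulse_width_to_distance_m(0.010), 2))
```

Under these assumptions, every millisecond of pulse width adds about 17 cm of distance.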

“We get this data from the hardware. This data is filtered for accurate values and then integrated with LabVIEW software to find out the vehicle’s distance from each obstacle, and also to determine when to start moving so that the vehicle does not disturb obstacles in any way,” shares Kumar.
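The article does not say which filter the team used or how the move/stop decision was made. As a rough sketch of the idea, the Python below smooths range readings with a sliding median (an assumed choice, good at rejecting single spurious echoes) and compares the result with a stop threshold. The class name, window size and 0.5 m threshold are all hypothetical.

```python
from collections import deque
from statistics import median


class RangeFilter:
    """Sliding-median filter over the most recent range readings."""

    def __init__(self, window: int = 5):
        # deque with maxlen drops the oldest sample automatically.
        self._samples = deque(maxlen=window)

    def update(self, distance_m: float) -> float:
        """Add one raw reading and return the filtered distance."""
        self._samples.append(distance_m)
        return median(self._samples)


def is_path_clear(filtered_distance_m: float, stop_distance_m: float = 0.5) -> bool:
    """Return True when the nearest obstacle is beyond the stop threshold."""
    return filtered_distance_m > stop_distance_m


if __name__ == "__main__":
    range_filter = RangeFilter(window=3)
    # The 5.0 m reading is an outlier that the median suppresses.
    for raw in (1.0, 5.0, 1.1, 1.2):
        filtered = range_filter.update(raw)
        print(raw, filtered, is_path_clear(filtered))
```

A median is used here rather than a running average because one bad echo would otherwise drag the estimate far from the true distance.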

What lies ahead

After graduating from college, four of the group members started Electrono Solutions, a design consultancy and automation solutions provider for industry and academia. The organization is also proposing that the prototype be used in educational institutes, where students working on their projects can use it as a platform to test their own algorithms and sensors. The team is also looking to provide complete solutions based on the prototype, tailored to customers’ needs.

Future areas of development include picking up garbage, assisting tutors in educational institutions by testing students based on algorithms, carrying patients from one ward to another in hospitals, and even exploring unknown territories (space exploration).

The author is from EFY Bureau, Bengaluru