How long can the industry rely on Moore’s Law? Today’s computers are very good at calculation and can handle almost anything that can be reduced to a numerical problem. But complex problems that need a good deal of reasoning, or so-called ‘intuition,’ require a great deal of programming, and hence too much processing and power. Simply stuffing more transistors into smaller chips will not get us there. So, what next?

Seeking the next frontier of computing, research teams across the world are moving away from traditional chip design methods and radically redesigning memory, computation and communication circuitry based on how the neurons and synapses of the brain work. This could be a big leap for artificial intelligence, eventually resulting in self-learning computers that understand and adapt to changes, complete tasks without routine programming and work around failures. Such computers are commonly dubbed ‘neuromorphic’ because they mimic the human brain. Here, we look at some of the significant strides in this direction.

Mimicking the mammalian brain in function, size and power consumption

One of the largest and oldest projects in this direction is the DARPA-sponsored Systems of Neuromorphic Adaptive Plastic Scalable Electronics (SyNAPSE), contracted mainly to IBM and HRL Laboratories along with several US-based universities. The goal of the project is to build a processor that imitates a mammal’s brain in function, size and power consumption. Specifically, “It should recreate 10 billion neurons, 100 trillion synapses, consume one kilowatt and occupy less than two litres of space.” Since it started in 2008, the project has produced some interesting results.
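Dividing the SyNAPSE targets against each other gives a feel for their scale. This is simple arithmetic on the quoted figures, not numbers published by the project:

$$
\frac{100 \times 10^{12}\ \text{synapses}}{10 \times 10^{9}\ \text{neurons}} = 10^{4}\ \text{synapses per neuron},
\qquad
\frac{1\ \text{kW}}{10^{10}\ \text{neurons}} = 10^{-7}\ \text{W} = 100\ \text{nW per neuron}
$$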

The first breakthrough came in 2011, when IBM revealed two working prototype neurosynaptic cores. Both were fabricated in 45nm silicon-on-insulator (SOI) complementary metal-oxide-semiconductor (CMOS) technology and contained 256 neurons each. One core had 262,144 programmable synapses, while the other had 65,536 learning synapses. Then came the Brain Wall, a visualisation tool that lets researchers view neuron activation states in a large-scale neural network and observe patterns of neural activity as they move across the network. It helps visualise supercomputer simulations as well as activity within a neurosynaptic core.
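The text does not say which learning rule the 65,536 ‘learning synapses’ use. Spike-timing-dependent plasticity (STDP) is a common choice in neuromorphic hardware, so here is a minimal Python sketch of a pair-based STDP update. The constants `A_PLUS`, `A_MINUS`, `TAU_PLUS` and `TAU_MINUS` and the function names are illustrative assumptions, not values or interfaces from IBM’s chip.

```python
import math

# Illustrative STDP constants (assumed, not IBM's values).
A_PLUS = 0.01      # maximum strengthening per spike pair
A_MINUS = 0.012    # maximum weakening per spike pair
TAU_PLUS = 20.0    # decay time constant for strengthening (ms)
TAU_MINUS = 20.0   # decay time constant for weakening (ms)
W_MIN, W_MAX = 0.0, 1.0


def stdp_delta(t_pre: float, t_post: float) -> float:
    """Weight change for one presynaptic/postsynaptic spike pair (times in ms)."""
    dt = t_post - t_pre
    if dt > 0:   # input spike arrived before the neuron fired: strengthen
        return A_PLUS * math.exp(-dt / TAU_PLUS)
    if dt < 0:   # input spike arrived after the neuron fired: weaken
        return -A_MINUS * math.exp(dt / TAU_MINUS)
    return 0.0   # simultaneous spikes: no change in this simple rule


def update_weight(w: float, t_pre: float, t_post: float) -> float:
    """Apply one STDP update and clip the weight to [W_MIN, W_MAX]."""
    return min(W_MAX, max(W_MIN, w + stdp_delta(t_pre, t_post)))
```

A synapse is strengthened when its input spike shortly precedes the neuron’s output spike and weakened when it shortly follows; the exponential makes the change fade as the two spikes move apart in time, so learning happens locally at each synapse without any external program.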

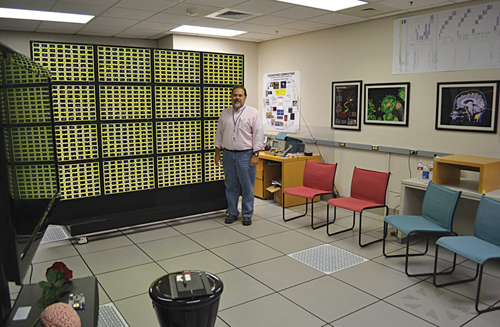

Meanwhile, in 2012, IBM simulated its TrueNorth computing architecture at a scale of 530 billion neurons and 100 trillion synapses, running on what was then the world’s second-fastest supercomputer. TrueNorth was supported by Compass, a multi-threaded, massively parallel functional simulator, together with a parallel compiler that maps a network of long-distance pathways in the macaque monkey brain onto TrueNorth.

Last year, IBM shared more details. Its chips are radically different from those based on the conventional von Neumann architecture: the new model uses many low-power processor cores working in parallel. Each neurosynaptic core has its own memory (synapses), processor (neurons) and communication conduit (axons). Working together, these components give the cores recognition and other sensing capabilities similar to those of the brain. IBM also revealed a software ecosystem that taps the power of such cores, notably a simulator that can run a virtual network of neurosynaptic cores for testing and research.
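To make the core structure concrete, here is a minimal Python sketch of one neurosynaptic core under a simplified model: incoming axons drive a binary synapse crossbar (the core’s memory), which feeds integrate-and-fire neurons (its processing elements). The class `NeurosynapticCore` and its per-neuron weights, threshold and leak are hypothetical simplifications for illustration, not IBM’s actual neuron model or software interface.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class NeurosynapticCore:
    """Toy core: axons -> binary synapse crossbar -> integrate-and-fire neurons.
    A simplified illustration, not IBM's design."""
    crossbar: List[List[int]]   # crossbar[axon][neuron] == 1 if a synapse connects them
    weights: List[float]        # per-neuron weight added for each active connected axon
    threshold: float = 1.0      # potential at which a neuron fires
    leak: float = 0.0           # amount subtracted from each potential every tick
    potentials: List[float] = field(init=False, default_factory=list)

    def __post_init__(self) -> None:
        # Each neuron's membrane potential starts at zero.
        self.potentials = [0.0] * len(self.weights)

    def tick(self, axon_spikes: List[int]) -> List[int]:
        """Advance one time step; return 1 for each neuron that fired, else 0."""
        fired = []
        for n in range(len(self.potentials)):
            # Integrate: add weighted input from every active, connected axon.
            drive = sum(self.weights[n]
                        for a, spike in enumerate(axon_spikes)
                        if spike and self.crossbar[a][n])
            v = max(0.0, self.potentials[n] + drive - self.leak)
            if v >= self.threshold:   # fire, then reset the potential
                fired.append(1)
                v = 0.0
            else:
                fired.append(0)
            self.potentials[n] = v
        return fired
```

For example, with three axons and two neurons, `NeurosynapticCore(crossbar=[[1, 0], [1, 1], [0, 1]], weights=[0.5, 0.4])` fires neuron 0 on the input `[1, 1, 0]`, then fires neuron 1 on the next input `[0, 1, 1]` once its accumulated potential crosses the threshold. Because synapses and neuron state sit side by side inside each core, no shared memory bus is involved, which is the contrast with von Neumann designs that the text draws.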

To make use of these neurosynaptic cores, IBM proposes a programming model based on reusable, stackable building blocks called corelets. IBM’s ultimate goal is to build a processing system with 10 billion neurons and 100 trillion synapses drawing just one kilowatt of power. Currently, IBM and Cornell University are working on the second generation of neurosynaptic processors, whose cores will emulate 256 neurons each, like the first-generation cores. But thanks to newly developed inter-core communication, each processor is expected to contain around 4,000 cores, for a total of around one million neurons per processor.
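The corelet idea of reusable, stackable blocks can be sketched in Python as follows. This is a hypothetical illustration, not IBM’s corelet programming environment: `Corelet`, `stack` and their fields are assumed names. Here a corelet hides its internal cores and exposes only the widths of its inputs and outputs, and two corelets can be stacked when the first one’s outputs match the second one’s inputs. Only the 256-neurons-per-core figure comes from the text.

```python
from dataclasses import dataclass, field
from typing import List

NEURONS_PER_CORE = 256  # from the text: each core emulates 256 neurons


@dataclass
class Corelet:
    """Hypothetical reusable block: hides its internals and exposes only
    the widths of its input and output connectors."""
    name: str
    cores: int = 0        # cores used directly by this block
    inputs: int = 0       # number of external input lines
    outputs: int = 0      # number of external output lines
    children: List["Corelet"] = field(default_factory=list)

    def total_cores(self) -> int:
        """Cores used by this block plus all nested blocks."""
        return self.cores + sum(child.total_cores() for child in self.children)

    def total_neurons(self) -> int:
        return self.total_cores() * NEURONS_PER_CORE


def stack(name: str, first: Corelet, second: Corelet) -> Corelet:
    """Wire first's outputs into second's inputs, producing a larger corelet
    that can itself be reused and stacked."""
    if first.outputs != second.inputs:
        raise ValueError("cannot stack: output and input widths differ")
    return Corelet(name, inputs=first.inputs, outputs=second.outputs,
                   children=[first, second])
```

Stacking a 10-core edge detector (64 inputs, 32 outputs) onto a 20-core classifier (32 inputs, 10 outputs) yields a 30-core corelet with 64 inputs and 10 outputs, which can be stacked again like any other block. A single block of 4,000 cores accounts for 4,000 × 256 = 1,024,000 neurons, consistent with the text’s estimate of around one million neurons per processor.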

A chip that can anticipate user actions

Last year, Qualcomm revealed a successful effort in this direction. Its Zeroth project was driven by people’s changing expectations of mobile devices: in future, people will want their devices to understand them and help them without being told, and to interact with them more naturally. “The computational complexity of achieving these goals using traditional computing architectures is quite challenging, particularly in a power- and size-constrained environment vs in the cloud and using supercomputers,” writes Samir Kumar, director–business development, Qualcomm.

Hence, Qualcomm set out to create a new, biologically inspired processor designed like the human brain and nervous system, so that devices could have embedded cognition driven by brain-inspired computing. The result was Zeroth. Zeroth-based products will have not just human-like perception but also the ability to learn on the go from environmental feedback, as we do. Qualcomm has also taught the processor to perceive things the way humans do, using mathematical models created by neuroscientists.

These models accurately characterise how biological neurons behave when sending, receiving or processing information. Qualcomm aims to create, define and standardise this new processing architecture, which it calls the neural processing unit (NPU), so that it can be used alongside other chips in a variety of devices. The aim is for future devices to be characterised by a combination of programming, learning and self-correction.
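As an example of the kind of neuroscience-derived model described above, here is a minimal Python sketch of the Izhikevich spiking-neuron model, integrated with the forward Euler method. The text does not say which models Qualcomm uses; the Izhikevich model, its ‘regular spiking’ parameters (a = 0.02, b = 0.2, c = -65, d = 8) and the function name `izhikevich_spikes` are assumptions chosen for illustration.

```python
from typing import List


def izhikevich_spikes(current: float, duration_ms: float = 1000.0,
                      dt: float = 0.25, a: float = 0.02, b: float = 0.2,
                      c: float = -65.0, d: float = 8.0) -> List[float]:
    """Simulate one Izhikevich neuron under a constant input current and
    return its spike times in ms. Defaults are 'regular spiking' parameters."""
    v = c          # membrane potential (mV)
    u = b * v      # recovery variable
    spikes: List[float] = []
    for i in range(int(duration_ms / dt)):
        if v >= 30.0:          # spike peak reached: record it and reset
            spikes.append(i * dt)
            v = c
            u += d
        # Forward-Euler step of the two coupled differential equations:
        #   v' = 0.04 v^2 + 5 v + 140 - u + I
        #   u' = a (b v - u)
        dv = 0.04 * v * v + 5.0 * v + 140.0 - u + current
        du = a * (b * v - u)
        v += dt * dv
        u += dt * du
    return spikes
```

With no input current the neuron settles at its resting potential and never fires, while a sustained current of 10 produces a regular train of spikes. Changing `a`, `b`, `c` and `d` lets the same two equations reproduce other firing patterns seen in real neurons, such as bursting.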

To demonstrate Zeroth, Qualcomm built a robot using the NPU last year and trained it to do various things, such as picking out only the white boxes from a selection of assorted boxes and sorting and arranging toys in a child’s room, all by ‘teaching’ rather than ‘programming’ it.
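The difference between ‘teaching’ and ‘programming’ can be illustrated with a minimal reward-driven learner in Python. This is a generic epsilon-greedy sketch, not Qualcomm’s algorithm, which the text does not describe; the function `teach` and its parameters are hypothetical. The rule ‘pick white’ never appears in the code: the learner only receives a reward when it happens to choose the colour the teacher wants.

```python
import random
from typing import Dict, List


def teach(colours: List[str], rewarded: str, trials: int = 500,
          epsilon: float = 0.1, seed: int = 0) -> Dict[str, float]:
    """Learn which colour to pick purely from reward feedback.
    Returns the learner's estimated reward for each colour."""
    rng = random.Random(seed)
    value = {c: 0.0 for c in colours}   # running estimate of reward per choice
    count = {c: 0 for c in colours}     # how often each choice was tried
    for _ in range(trials):
        # Mostly exploit the current best guess; occasionally explore at random.
        if rng.random() < epsilon:
            choice = rng.choice(colours)
        else:
            choice = max(colours, key=value.__getitem__)
        # The teacher's feedback: the only place the desired behaviour appears.
        reward = 1.0 if choice == rewarded else 0.0
        count[choice] += 1
        value[choice] += (reward - value[choice]) / count[choice]  # incremental mean
    return value
```

After a few hundred trials, `teach(["red", "blue", "white"], rewarded="white")` assigns "white" the highest estimated value, so the learner’s greedy choice becomes picking the white boxes. Changing only the reward, not the code, would teach it a different preference.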

An analogue brain

While the previous two examples are based on ‘digital neurons,’ a neuromorphic system being developed at the University of Heidelberg as part of the Human Brain Project, sponsored by the European Union, is based on analogue circuitry, which brings it closer to the real brain.

To understand how the brain works, from individual neurons to whole functional areas, the BrainScaleS project takes three approaches: in vivo biological experimentation, simulation on petascale supercomputers and the construction of neuromorphic processors.

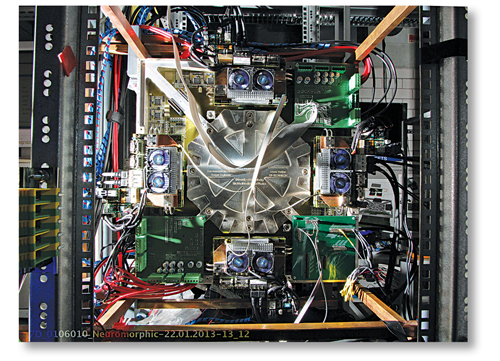

The neuromorphic processor built by the team consists of a 20cm-diameter silicon wafer containing an array of identical, tightly connected chips with mixed-signal circuitry. The neurons are implemented as analogue circuits, while the synaptic weights and inter-chip communication are digital.

Each wafer contains 48 reticles, each of which in turn holds eight high-input-count analogue neural network (HICANN) chips, for a total of 384 identical chips per wafer. Each 5×10 mm² HICANN chip contains an analogue neural core (ANC) as its central functional block, along with supporting circuitry. In the current setup, each of the 384 chips implements 128,000 synapses and up to 512 spiking neurons, totalling approximately 200,000 neurons and 49 million synapses per wafer.
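The per-wafer totals follow directly from the chip counts quoted above:

$$
48 \times 8 = 384\ \text{chips},
\qquad
384 \times 512 = 196{,}608 \approx 200{,}000\ \text{neurons},
\qquad
384 \times 128{,}000 = 49{,}152{,}000 \approx 49\ \text{million synapses}
$$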